Fake news and social media posts are such a threat to U.S. security that the Defense Department is launching a project to repel “large-scale, automated disinformation attacks,” as the top Republican in Congress blocks efforts to protect the integrity of elections.

The Defense Advanced Research Projects Agency wants custom software that can unearth fakes hidden among more than 500,000 stories, photos, video and audio clips. If successful, the system may be expanded after four years of trials to detect malicious intent and prevent viral fake news from polarizing society.

“A decade ago, today’s state-of-the-art would have registered as sci-fi — that’s how fast the improvements have come,” said Andrew Grotto at the Center for International Security at Stanford University. “There is no reason to think the pace of innovation will slow any time soon.”

U.S. officials have been working on plans to prevent outside hackers from flooding social channels with false information ahead of the 2020 election. The drive has been hindered by Senate Majority Leader Mitch McConnell’s refusal to consider election-security legislation.

By increasing the number of algorithm checks, the military research agency hopes it can spot fake news with malicious intent before it goes viral.

The agency said: “These SemaFor technologies will help identify, deter, and understand adversary disinformation campaigns.”

Current surveillance systems are prone to “semantic errors.” An example, according to the agency, is software not noticing mismatched earrings in a fake video or photo. Other indicators, which may be noticed by humans but missed by machines, include weird teeth, messy hair and unusual backgrounds.

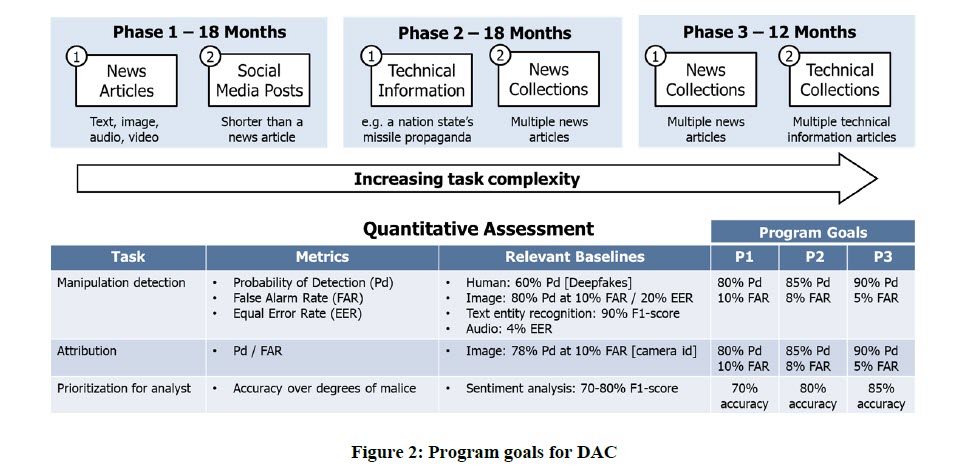

The algorithm testing process will include an ability to scan and evaluate 250,000 news articles and 250,000 social media posts, with 5,000 fake items in the mix. The program has three phases over 48 months, initially covering news and social media before an analysis of technical propaganda begins. The project will also include week-long “hackathons.”